Building a Human-in-the-Loop AI Agent in Liferay

The Challenge: The "Human Bottleneck"

In any thriving digital community, content moderation is a double-edged sword. You want vibrant discussions, but you also need to protect users from spam, toxicity, and "bad vibes." Traditionally, this meant a human moderator had to manually review every single comment, a process that is slow, expensive, and, frankly, exhausting.

The Vision: AI as the First Line of Defense

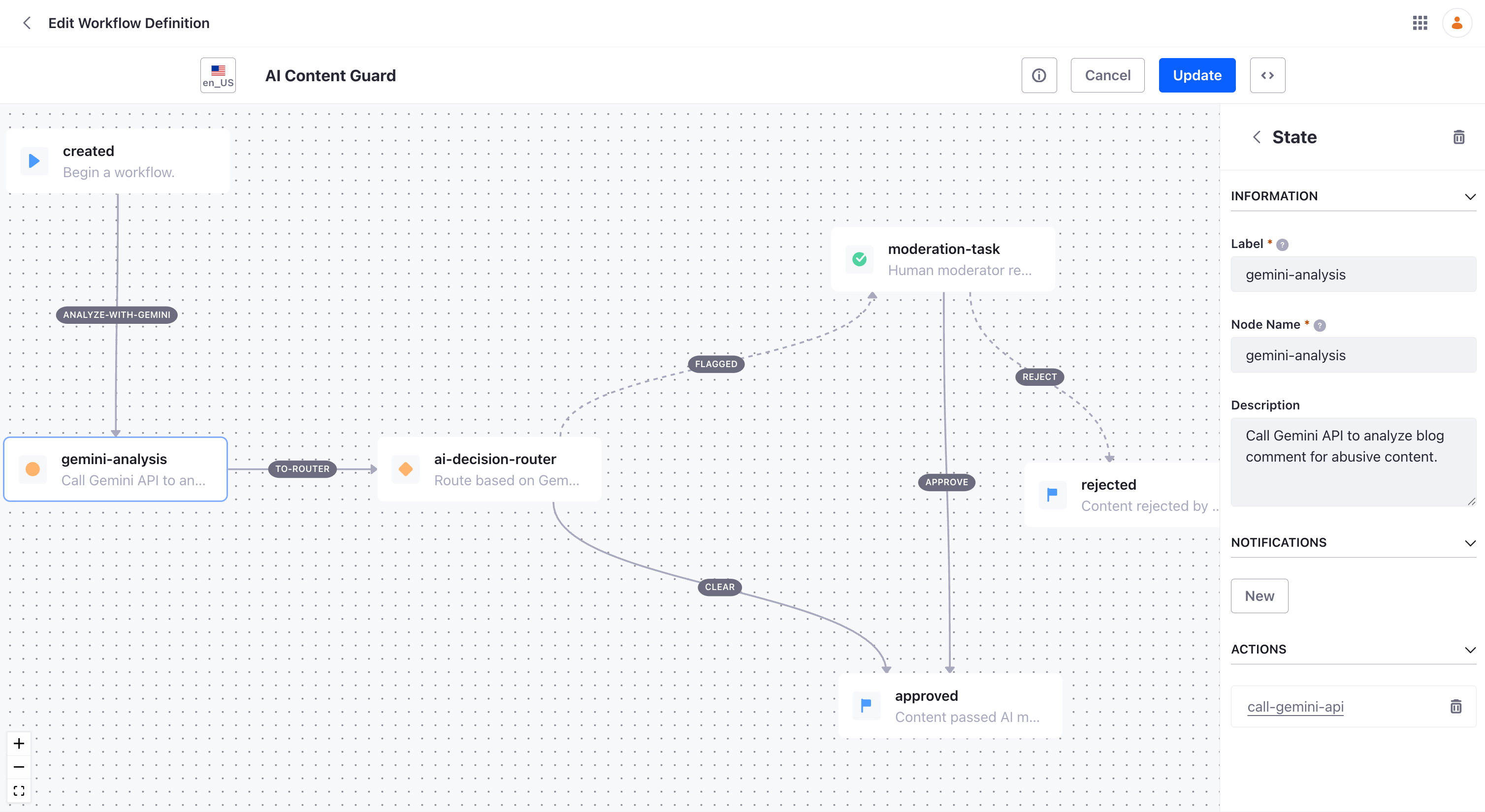

I decided to build a "Smart Gatekeeper" using Liferay’s Kaleo Workflow engine and Google Gemini 2.5 Flash. The goal wasn't to replace humans, but to empower them. We wanted an AI agent that could:

Analyze the intent of a comment instantly.

Flag suspicious content with a specific reason.

Handle sarcasm, distinguishing a frustrated user from a toxic one.
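All three capabilities depend on getting a predictable answer back from the model. The sketch below shows one way to do that. It assumes a hypothetical one-line reply format (`APPROVE` or `FLAG: <reason>`) and an illustrative prompt; neither is the exact prompt or format used in the POC. It is written in Java 17 so it can be compiled and tested on its own, and porting it into a Groovy workflow script should be largely mechanical.

```java
/**
 * Hypothetical verdict format: the model is asked to reply "APPROVE" or "FLAG: <reason>".
 */
record ModerationVerdict(boolean flagged, String reason) {

    /** Builds an illustrative prompt; the wording is an assumption, not the POC's prompt. */
    static String buildPrompt(String comment) {
        return String.join("\n",
            "You are a moderator for an online community. Review the comment below.",
            "Judge intent, not keywords: frustration with the product is acceptable,",
            "but spam or hostility, including hostility disguised as sarcasm, is not.",
            "Reply with exactly one line: APPROVE, or FLAG: <short reason>.",
            "",
            "Comment: " + comment);
    }

    /** Parses the model's reply into a verdict (case-insensitive, surrounding whitespace ignored). */
    static ModerationVerdict parse(String reply) {
        String text = reply == null ? "" : reply.strip();
        if (text.equalsIgnoreCase("APPROVE")) {
            return new ModerationVerdict(false, "");
        }
        if (text.regionMatches(true, 0, "FLAG:", 0, 5)) {
            // Everything after "FLAG:" is kept as the reason shown to the moderator.
            return new ModerationVerdict(true, text.substring(5).strip());
        }
        // Fail safe: anything unexpected goes to a human instead of being auto-approved.
        return new ModerationVerdict(true, "Unrecognized AI reply: " + text);
    }
}
```

Treating an unrecognized reply as flagged is a deliberate design choice: if the model misbehaves, the comment falls back to a human instead of being silently approved.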

The Tech Stack

Building this required stitching together a few key components:

Liferay Workflow (XML): Defining the path from "Submitted" to "Approved."

Groovy Scripting: The engine that "talks" to the outside world.

Gemini API: Our reasoning engine that provides the sentiment analysis.
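The "talks to the outside world" step boils down to one HTTPS POST to Gemini's `generateContent` REST endpoint. The sketch below builds that request with the JDK's `java.net.http` client (Java 11+; the `switch` arrows need Java 14+). It assumes the API key is supplied through a `GEMINI_API_KEY` environment variable and hand-escapes the JSON body to avoid a library dependency; a production script would more likely use a JSON library. The class and method names are hypothetical.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

/** Hypothetical helper that builds and sends a Gemini generateContent request. */
final class GeminiClient {
    // REST endpoint for the model named in this post.
    static final String ENDPOINT =
        "https://generativelanguage.googleapis.com/v1beta/models/gemini-2.5-flash:generateContent";

    /** Minimal JSON string escaping: quotes, backslashes, and control characters. */
    static String jsonString(String s) {
        StringBuilder out = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"' -> out.append("\\\"");
                case '\\' -> out.append("\\\\");
                case '\n' -> out.append("\\n");
                case '\r' -> out.append("\\r");
                case '\t' -> out.append("\\t");
                default -> {
                    if (c < 0x20) {
                        out.append(String.format("\\u%04x", (int) c));
                    } else {
                        out.append(c);
                    }
                }
            }
        }
        return out.append('"').toString();
    }

    /** Request body shape for generateContent: one content entry with one text part. */
    static String requestBody(String prompt) {
        return "{\"contents\":[{\"parts\":[{\"text\":" + jsonString(prompt) + "}]}]}";
    }

    static HttpRequest buildRequest(String prompt, String apiKey) {
        return HttpRequest.newBuilder(URI.create(ENDPOINT))
            .header("Content-Type", "application/json")
            .header("x-goog-api-key", apiKey)
            .POST(HttpRequest.BodyPublishers.ofString(requestBody(prompt)))
            .build();
    }

    /** Sends the request and returns the raw JSON response body. */
    static String send(String prompt) throws IOException, InterruptedException {
        String apiKey = System.getenv("GEMINI_API_KEY"); // assumption: key provided via environment
        if (apiKey == null || apiKey.isBlank()) {
            throw new IllegalStateException("GEMINI_API_KEY is not set");
        }
        HttpResponse<String> response = HttpClient.newHttpClient()
            .send(buildRequest(prompt, apiKey), HttpResponse.BodyHandlers.ofString());
        if (response.statusCode() != 200) {
            throw new IllegalStateException("Gemini returned HTTP " + response.statusCode());
        }
        return response.body();
    }
}
```

The model's text reply is found at `candidates[0].content.parts[0].text` in the response JSON, which is the part the workflow script actually needs to inspect.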

Testing for Nuance: The Sarcasm Factor

To really test the system, we didn't just use "easy" spam; we threw sarcastic edge cases at it.

Test: "Oh, perfect. I love it when the button deletes my data. Truly a 10/10 experience."

Result: Gemini successfully identified the irony! Where a "dumb" keyword filter would have seen "10/10" and "love" and auto-approved the comment, the AI flagged it for review, noting the user's frustration with a technical problem.
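To make the contrast concrete, here is the kind of keyword filter the sarcasm test was designed to beat. It is a hypothetical baseline, not part of the POC: it approves any comment whose positive keywords outnumber its negative ones, and the word lists are made up for illustration.

```java
import java.util.List;
import java.util.Locale;

/** Hypothetical keyword baseline, shown only to illustrate why sarcasm defeats it. */
final class NaiveKeywordFilter {
    private static final List<String> POSITIVE = List.of("love", "perfect", "great", "10/10");
    private static final List<String> NEGATIVE = List.of("hate", "awful", "terrible", "worst");

    /** Counts how many of the given keywords appear anywhere in the text. */
    static int count(String text, List<String> words) {
        int n = 0;
        for (String w : words) {
            if (text.contains(w)) {
                n++;
            }
        }
        return n;
    }

    /** Approves when positive keywords outnumber negative ones; context is ignored entirely. */
    static boolean approves(String comment) {
        String text = comment.toLowerCase(Locale.ROOT);
        return count(text, POSITIVE) > count(text, NEGATIVE);
    }
}
```

The sarcastic test comment matches three positive keywords ("perfect", "love", "10/10") and no negative ones, so this filter would auto-approve it; the Gemini-based check is instead asked to reason about intent.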

The Result: Faster, Safer Communities

Today, our moderators only see what matters. When a task hits their desk, they aren't looking at a blank page; they see a note from our AI agent:

"AI Flagged: Potential hostile sarcasm directed at the author."

This "human-in-the-loop" approach combines the speed of AI with the empathy and judgment of a human.
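In a Kaleo condition node, the script chooses the next step by assigning the name of a transition to `returnValue`. The Java sketch below isolates that decision so it can be tested outside Liferay: clean comments take an auto-approve transition, and flagged ones go to the moderator's task with an "AI Flagged: ..." note stored in the workflow context. The transition names (`approve`, `review`) and the `aiNote` key are hypothetical, and the `Map<String, Serializable>` parameter only mirrors the shape of the `workflowContext` map a script receives. Whether the note then appears on the reviewer's task depends on how the task or its notification is configured.

```java
import java.io.Serializable;
import java.util.Map;

/** Hypothetical decision logic that a Kaleo condition script could delegate to. */
final class ModerationRouter {
    static final String APPROVE = "approve"; // hypothetical transition: publish directly
    static final String REVIEW = "review";   // hypothetical transition: moderator task

    /**
     * Returns the transition name a condition script would assign to returnValue.
     * For flagged comments it also stores a reviewer note under a hypothetical "aiNote" key.
     */
    static String route(boolean flagged, String reason, Map<String, Serializable> workflowContext) {
        if (!flagged) {
            return APPROVE;
        }
        String detail = (reason == null || reason.isBlank()) ? "no reason given" : reason.strip();
        workflowContext.put("aiNote", "AI Flagged: " + detail);
        return REVIEW;
    }
}
```

Keeping the routing rule this small means the AI never rejects anything on its own: the worst a bad verdict can do is send a harmless comment to a human.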

What’s Next: From Scripting to Scale

While my current proof of concept (POC) successfully leverages Liferay Workflow scripting to bridge the gap between content and AI, I am already looking at the next evolution of this architecture. To build a more maintainable and decoupled system, I plan to migrate this logic to Liferay's modern extensibility framework:

Liferay AI Hub: Instead of managing raw REST calls and API keys within Groovy scripts, I will leverage the AI Hub. It centralizes LLM configuration (for providers such as Gemini or Vertex AI), providing a cleaner, more secure way to manage AI interactions across the entire portal.

Client Extensions: To move away from in-JVM scripting, I will look into Client Extensions, which let us host the moderation logic in a separate microservice written in any language we prefer. This makes the workflow architecture more resilient, easier to unit test, and significantly simpler to upgrade.
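To make the out-of-process idea concrete, here is a minimal standalone service built on the JDK's built-in `com.sun.net.httpserver.HttpServer`. It is a hypothetical stand-in for the moderation microservice: the `/moderate` path, the plain-text request and response, and the stubbed `classify` method are assumptions, and it omits everything Liferay-specific, such as how the extension is registered and how requests are authenticated.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;

/** Hypothetical standalone moderation service; not Liferay's client extension contract. */
final class ModerationService {

    /** Placeholder classifier; a real service would call the LLM here. */
    static String classify(String comment) {
        return comment.isBlank() ? "FLAG: empty comment" : "APPROVE";
    }

    /** Starts the service on 127.0.0.1; pass port 0 to let the OS pick a free port. */
    static HttpServer start(int port) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress("127.0.0.1", port), 0);
        server.createContext("/moderate", exchange -> {
            try {
                if (!"POST".equals(exchange.getRequestMethod())) {
                    exchange.sendResponseHeaders(405, -1); // -1 means no response body
                    return;
                }
                String comment = new String(exchange.getRequestBody().readAllBytes(), StandardCharsets.UTF_8);
                byte[] reply = classify(comment).getBytes(StandardCharsets.UTF_8);
                exchange.getResponseHeaders().set("Content-Type", "text/plain; charset=utf-8");
                exchange.sendResponseHeaders(200, reply.length);
                try (OutputStream body = exchange.getResponseBody()) {
                    body.write(reply);
                }
            } finally {
                exchange.close();
            }
        });
        server.start();
        return server;
    }
}
```

Because the classifier lives behind an HTTP endpoint, it can be unit tested, scaled, and redeployed without touching the portal, which is the resilience argument made above.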

By shifting from internal scripts to these out-of-process extensions, we ensure that our AI moderation remains "low-code" for the workflow designer while staying "high-performance" for the system administrator.